Instruct Vid2Vid

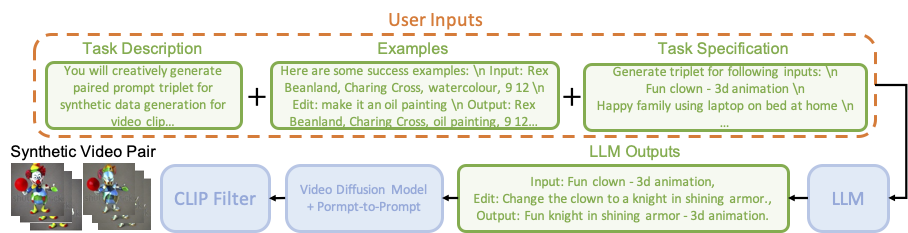

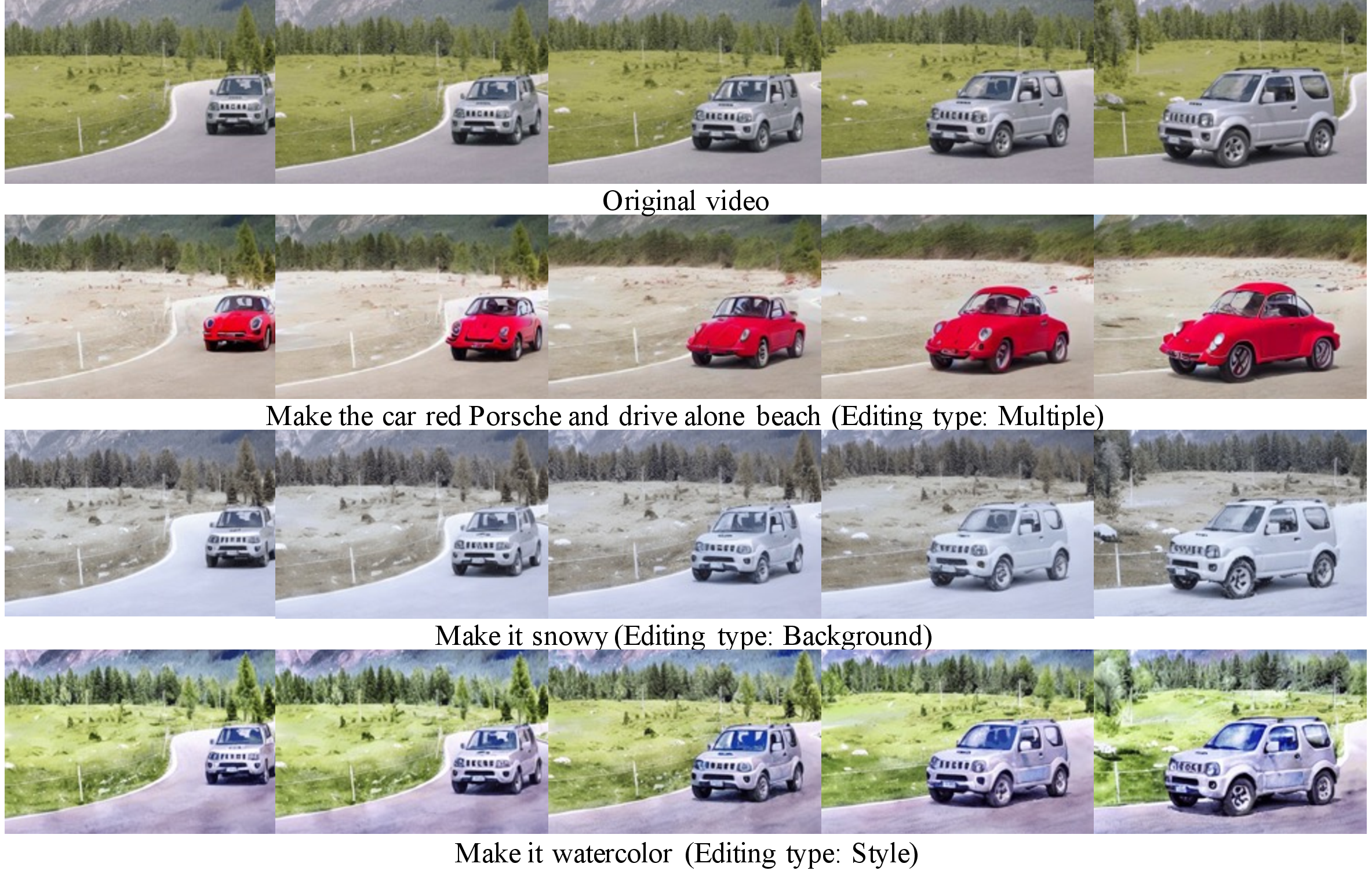

We introduce a novel and efficient approach for text-based video-to-video editing that eliminates the need for resource-intensive per-video-per-model finetuning. At the core of our approach is a synthetic paired video dataset tailored for video-to-video transfer tasks. Inspired by Instruct Pix2Pix’s image transfer via editing instructions, we adapt this paradigm to the video domain. By extending Prompt-to-Prompt to videos, we efficiently generate paired samples, each consisting of an input video and its edited counterpart. We also introduce Long Video Sampling Correction, applied during sampling, which keeps long videos consistent across batches. Our method surpasses current methods such as Tune-A-Video, marking substantial progress in text-based video-to-video editing and suggesting avenues for further exploration and deployment.
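To make the idea of a paired sample concrete, here is a minimal Python sketch of what one training triple could look like: an input clip, an editing instruction, and the edited clip. The class name `PairedVideoSample`, its field names, and the `Frame` type are hypothetical and are not taken from the released code. The check that both clips have the same number of frames is an assumption about how the pairs are built.

```python
from dataclasses import dataclass
from typing import List, Sequence

# Hypothetical stand-in for an image: rows of pixel values.
Frame = Sequence[Sequence[float]]


@dataclass(frozen=True)
class PairedVideoSample:
    """One synthetic triple: source clip, edit instruction, edited clip."""

    input_frames: List[Frame]
    edit_instruction: str
    edited_frames: List[Frame]

    def __post_init__(self) -> None:
        # Assumption: the edited clip is frame-aligned with the input clip.
        if len(self.input_frames) != len(self.edited_frames):
            raise ValueError("input and edited clips must have the same number of frames")
        # An empty instruction would give the model nothing to condition on.
        if not self.edit_instruction.strip():
            raise ValueError("edit instruction must be non-empty")


# Usage: a two-frame toy clip with 2x2 "images".
frame = [[0.0, 0.0], [0.0, 0.0]]
sample = PairedVideoSample([frame, frame], "make it snowy", [frame, frame])
```

A model trained on such triples would take `input_frames` and `edit_instruction` as conditioning and learn to produce `edited_frames`, which is why no per-video finetuning is needed at inference time.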

- 2023/11/29: We have updated the paper with more comparisons to recent baseline methods and updated the comparison video.

Our method requires only an input video and an editing prompt to modify the video. There is no need to fine-tune the model on each video. Left: Input videos. Right: Edited videos.

Comparison videos against baseline methods: Tune-A-Video, Control Video, Vid2Vid Zero, Video P2P, TokenFlow, Render A Video, Pix2Video.

@article{insv2v,
  title={Consistent Video-to-Video Transfer Using Synthetic Dataset},
  author={Jiaxin Cheng and Tianjun Xiao and Tong He},
  year={2023},
}